Introduction

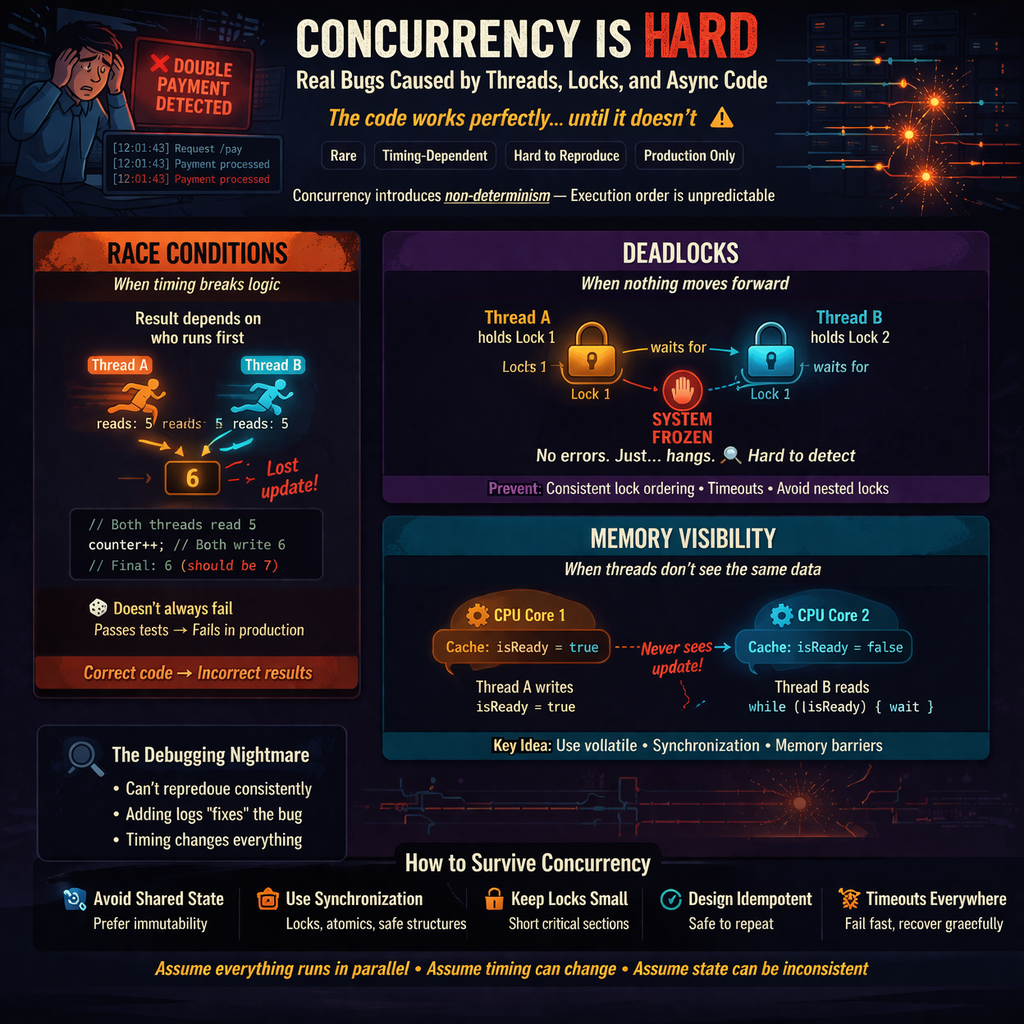

The code works perfectly… until it doesn’t.

You deploy. Everything looks stable. Metrics are green. Logs are clean.

Then one day:

- A user gets charged twice

- A counter suddenly jumps backward

- A service freezes with no errors

And when you try to reproduce it locally?

Nothing.

Welcome to the world of concurrency bugs.

These bugs are:

- Rare

- Timing-dependent

- Extremely hard to reproduce

- Even harder to debug

The real problem is this:

Concurrency introduces non-determinism.

Your code is no longer executed in a predictable order. The same logic can produce different results depending on timing.

In this post, we’ll walk through the most common concurrency bugs—not as theory, but as things that actually happen in production.

1. Why Concurrency Is Hard

At its core, concurrency means:

Multiple things are happening at the same time.

Sounds simple. It’s not.

When multiple threads (or async tasks) run simultaneously:

- You don’t control execution order

- You don’t control timing

- You don’t control interleaving

Your program becomes a system of possible outcomes, not a single predictable flow.

Analogy

Imagine multiple people editing the same Google Doc at the same time… but without real-time syncing.

One person deletes a paragraph. Another edits it. A third copies it.

Now ask yourself:

What does the final document look like?

That’s your backend system under concurrency.

2. Race Conditions: When Timing Breaks Logic

A race condition happens when:

The correctness of your program depends on the timing of execution.

Two threads access shared data. The final result depends on who runs first.

Simple Example

You have a shared counter:

- Thread A reads value = 5

- Thread B reads value = 5

- Thread A increments → 6

- Thread B increments → 6

Final value = 6, not 7.

You lost an update.

The Dangerous Part

Race conditions don’t fail consistently.

- 1000 requests → everything works

- 1 random request → incorrect result

Your tests pass. Your staging looks fine. Production breaks.

Classic Case: Check-Then-Act

if (balance > 0) { withdraw(); }Two threads check at the same time:

- Both see balance > 0

- Both withdraw

Now your system is in an impossible state.

Key Takeaway

Correct code can still produce incorrect results under concurrency.

3. Deadlocks: When Nothing Moves Forward

Deadlocks are simpler to understand—and more painful in production.

What Happens

- Thread A holds Lock 1, waiting for Lock 2

- Thread B holds Lock 2, waiting for Lock 1

Neither can proceed.

System is stuck.

Forever.

Real-World Symptoms

- Service hangs

- CPU usage drops

- No errors in logs

- Requests just… wait

Why It’s Hard

Deadlocks don’t throw exceptions.

They just silently stop progress.

Common Causes

- Nested locks

- Inconsistent lock ordering

- Complex dependency chains

Prevention (Conceptually)

- Always acquire locks in the same order

- Avoid holding multiple locks at once

- Use timeouts instead of waiting forever

Deadlocks are not about bad code.

They’re about bad coordination.

4. Memory Visibility Problems

This is where things get subtle.

Even if your logic is correct…

Threads might not see the same data.

Why?

Modern CPUs use caches.

Each thread may work with its own local copy of a variable.

So:

- Thread A updates a value

- Thread B keeps seeing the old value

Result?

Inconsistent behavior.

Example Scenario

A flag is updated:

isReady = true;Thread A sets it.

Thread B keeps reading:

while (!isReady) { // wait }And it never exits.

Why?

Because it never sees the update.

The Fix (Conceptually)

- Use

volatilevariables - Use proper synchronization

- Use memory barriers

Key Idea

Even if your code is logically correct, threads may not see the same data at the same time.

5. The Illusion of Safety in High-Level Code

Modern frameworks make concurrency feel easy.

You write:

- async/await

- promises

- background tasks

It looks clean.

But the complexity hasn’t disappeared.

It’s just hidden.

The Trap

You assume:

“This is async, so it’s safe.”

It’s not.

Example

Multiple async calls updating shared state:

- Request A updates cache

- Request B updates cache

- Order is unpredictable

Now your cache contains inconsistent data.

Another Case

Async error handling:

- One task fails silently

- Another task continues

- System ends up in a half-updated state

Reality

Concurrency bugs still exist.

You just don’t see the threads anymore.

6. Real-World Concurrency Failures

These are not hypothetical.

These are things that actually happen.

Double Payment

Two requests hit the payment service:

- Both check “payment not processed”

- Both process payment

User gets charged twice.

Cache Corruption

Multiple threads update a shared cache:

- One writes partial data

- Another overwrites it

Now your cache returns invalid responses.

Database Deadlocks

Two transactions:

- Transaction A locks row 1, wants row 2

- Transaction B locks row 2, wants row 1

Database kills one transaction.

User sees random failures.

Lost Updates in Distributed Systems

Two services update the same entity:

- Both read old value

- Both write updates

One update is lost.

No error. Just incorrect state.

7. Why These Bugs Are So Hard to Debug

Concurrency bugs don’t behave like normal bugs.

Problems

- They don’t happen consistently

- You can’t reproduce them easily

- Logs don’t always help

- Stack traces look normal

The Worst Part

Adding logs can change timing.

And suddenly…

The bug disappears.

You “fix” nothing—but it stops happening.

Why?

Because you changed execution order.

You didn’t solve the problem.

You hid it.

8. Practical Strategies to Handle Concurrency

You can’t avoid concurrency.

But you can reduce the damage.

Avoid Shared State When Possible

Stateless systems are easier to reason about.

Immutable data is your friend.

Use Proper Synchronization

- Locks

- Atomic operations

- Thread-safe data structures

Don’t “hope” it works.

Make it safe.

Keep Critical Sections Small

Hold locks for the shortest time possible.

Long locks = higher chance of contention and deadlocks.

Design for Idempotency

Make operations safe to repeat.

If a request runs twice, the result should still be correct.

Use Timeouts and Fail Safely

Never wait forever.

Fail fast. Recover gracefully.

9. Concurrency as a Systems Problem

Concurrency is not just about threads.

It shows up everywhere:

- Distributed systems

- Microservices

- Message queues

- Databases

Every time multiple things happen at once…

You have concurrency.

And as your system grows:

Concurrency problems multiply.

10. A Better Mental Model

If you remember one thing, remember this:

- Assume everything runs in parallel

- Assume execution order can change

- Assume state can be inconsistent

Don’t trust timing.

Design for chaos.

Think defensively.

Conclusion

Concurrency bugs are not obvious.

And they are not rare at scale.

They are:

- Subtle

- Dangerous

- Inevitable if ignored

You don’t “master” concurrency once and move on.

You constantly guard against it.

Because in the end:

Concurrency doesn’t break your code. It exposes the assumptions you didn’t know you were making.