Introduction

Every backend engineer has seen this pattern.

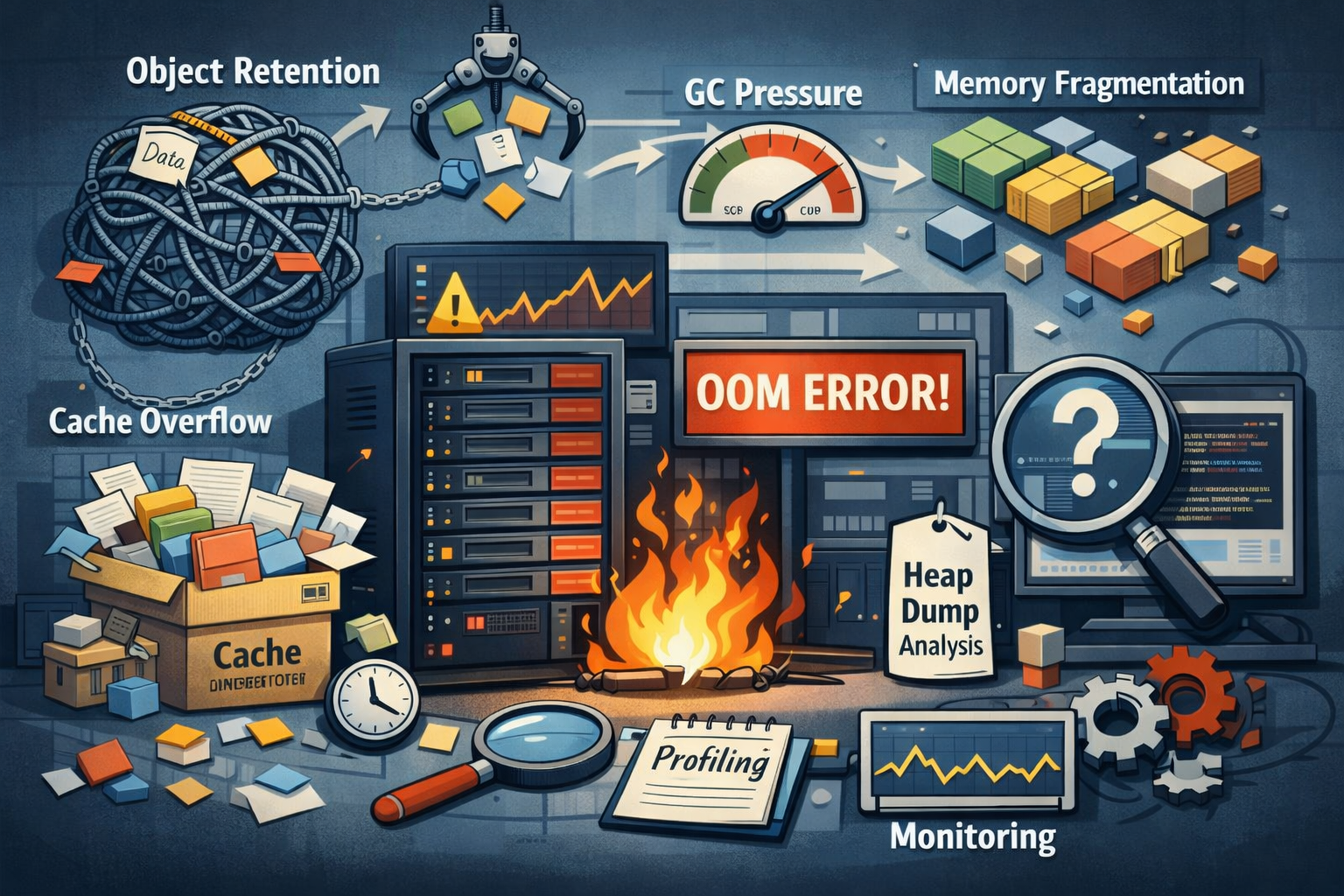

A service that has been running perfectly fine for weeks suddenly begins behaving strangely. Memory usage slowly climbs. CPU usage increases. Latency spikes start appearing in dashboards. Eventually the service crashes or becomes unstable.

The first instinct is almost universal.

"There must be a memory leak."

Logs are inspected. Deployments are blamed. Engineers start digging through recent code changes searching for the place where memory is being "forgotten".

But here is the twist.

Many real production memory problems happen without a traditional memory leak.

Garbage collection is working exactly as designed. The runtime is freeing unused memory correctly. Yet memory usage continues to grow, performance degrades, and the service becomes unstable.

This article is about those problems.

We'll walk through how modern backend systems experience memory instability even when there is no leak, and how to build a better mental model for understanding memory behavior in real systems.

1. The Traditional Idea of a Memory Leak

Before discussing modern systems, it's useful to understand what engineers historically meant by a memory leak.

In unmanaged languages like C or C++, developers manually allocate and free memory.

A simplified flow might look like:

allocate memory → use memory → free memory

If a program allocates memory but forgets to free it, that memory stays allocated until the process exits.

Over time:

- The application keeps consuming memory

- Available memory shrinks

- Eventually the program crashes

This is the classic definition of a memory leak.

To reduce these issues, many modern languages introduced managed runtimes with garbage collection, including:

- Java (JVM)

- Node.js (V8)

- Python

- Go

In these environments, the runtime automatically frees memory that is no longer used.

Developers do not explicitly free memory. Instead, the runtime tracks object references and removes objects that are no longer reachable.

Because of this, many developers assume something like:

Garbage collection means memory problems are mostly solved.

Unfortunately, this assumption is incomplete.

Garbage collection solves one category of memory problems, but many others still exist.

2. Garbage Collection Does Not Solve Everything

Garbage collectors work on a simple rule:

They free memory that is no longer reachable.

If no part of the application holds a reference to an object, the garbage collector can safely remove it.

Conceptually the runtime does something like this:

- Start from root references (threads, stacks, globals)

- Traverse all reachable objects

- Mark them as alive

- Reclaim everything else
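The four steps above describe a tracing "mark and sweep" collector. Below is a toy sketch of that rule in Python. The heap is modeled as a hypothetical dictionary from object names to the names they reference. Real collectors (generational, concurrent, compacting) are far more sophisticated, but the survival rule is the same: reachable from a root means alive.

```python
def mark_and_sweep(heap, roots):
    """Toy tracing collector.

    heap:  dict mapping an object name -> list of names it references
    roots: names referenced directly by stacks, globals, etc.
    Returns the set of names that survive; unreachable entries are deleted.
    """
    alive = set()
    stack = list(roots)
    # Mark phase: traverse everything reachable from the roots.
    while stack:
        obj = stack.pop()
        if obj in alive:
            continue
        alive.add(obj)
        stack.extend(heap.get(obj, []))
    # Sweep phase: reclaim every object that was not marked.
    for obj in list(heap):
        if obj not in alive:
            del heap[obj]
    return alive


heap = {
    "globals": ["sessions"],
    "sessions": ["session_1"],
    "session_1": [],
    "temp": ["temp_child"],  # nothing references "temp" any more
    "temp_child": [],
}
survivors = mark_and_sweep(heap, roots=["globals"])
print(sorted(survivors))  # ['globals', 'session_1', 'sessions']
```

Note that `session_1` survives simply because it is reachable, whether or not the application still needs it. That distinction is the subject of the next sections.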

This effectively eliminates the classic leak, because memory that nothing references any more is reclaimed automatically.

However, it introduces a limitation.

The garbage collector cannot free objects that are still referenced.

Even if those objects are no longer useful.

This leads to a very common class of problems:

Objects remain reachable even though the application no longer needs them.

This is called object retention.

3. Object Retention: The Hidden Memory Problem

Object retention happens when objects stay referenced longer than intended.

Nothing is technically leaking. The garbage collector sees valid references and keeps those objects alive.

But from the application's perspective, that memory should have been released long ago.

A common example is a growing global collection.

For example:

Map<UserId, SessionData> sessions

If new sessions are added but never removed, memory usage grows continuously.

The GC cannot clean this up because the map still references every session.
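Here is a minimal Python sketch of the same situation, assuming a hypothetical module-level `sessions` dictionary and hypothetical `login`/`logout` functions. A `weakref.ref` acts as a probe: it observes the session without keeping it alive, so it shows exactly when the object becomes collectable.

```python
import gc
import weakref
from dataclasses import dataclass, field


@dataclass
class SessionData:
    user_id: str
    # Stand-in for per-session state such as carts or cached profile data.
    payload: bytearray = field(default_factory=lambda: bytearray(64 * 1024))


# Global map: every entry is reachable from a module-level root.
sessions: dict = {}


def login(user_id: str) -> None:
    sessions[user_id] = SessionData(user_id)


def logout(user_id: str) -> None:
    # Without this removal, the session stays reachable for the
    # lifetime of the process, even after the user is gone.
    sessions.pop(user_id, None)


login("alice")
probe = weakref.ref(sessions["alice"])  # observes without retaining

gc.collect()
print(probe() is not None)  # True: the map still references the session

logout("alice")
gc.collect()
print(probe() is None)      # True: no references remain, so it was reclaimed
```

The fix is not a smarter collector but an explicit end to the object's lifetime: removal on logout, or an expiry policy like the cache limits discussed later.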

Another common scenario involves static references or long-lived services holding onto large data structures.

For example:

- A request object references a response

- The response references a large payload

- A logging system stores the request for debugging

Suddenly the entire payload remains in memory long after the request is complete.
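A small Python sketch of that chain, using hypothetical `Request`, `Response`, and `debug_log` names. The first handler stores the request object itself, so the log keeps the payload alive. The second stores only a compact summary, which lets the payload become unreachable when the handler returns.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Response:
    status: int
    payload: bytes  # can be megabytes in a real service


@dataclass
class Request:
    path: str
    response: Optional[Response] = None


# Hypothetical long-lived debug buffer owned by a logging component.
debug_log: list = []


def handle(path: str) -> None:
    req = Request(path)
    req.response = Response(200, bytes(1024 * 1024))  # 1 MiB payload
    # Storing the request keeps the whole chain alive:
    # debug_log -> req -> response -> payload
    debug_log.append(req)


def handle_fixed(path: str) -> None:
    req = Request(path)
    req.response = Response(200, bytes(1024 * 1024))
    # Store only the small facts needed for debugging; the payload
    # becomes unreachable once `req` goes out of scope.
    debug_log.append((req.path, req.response.status, len(req.response.payload)))


handle("/reports")
handle_fixed("/reports")
print(type(debug_log[0]).__name__, debug_log[1])  # Request ('/reports', 200, 1048576)
```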

Another subtle case appears with event listeners.

Listeners often capture surrounding variables through closures. If those listeners stay registered, the captured objects remain alive too.
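The listener case can be sketched in Python with a hypothetical `EventBus` that holds strong references to its subscribers. The closure `on_event` captures `report`, so as long as the bus holds the listener, the large `Report` object stays reachable. Unsubscribing breaks the chain.

```python
import gc
import weakref


class EventBus:
    """Hypothetical minimal event bus that holds strong references to listeners."""

    def __init__(self):
        self._listeners = []

    def subscribe(self, fn):
        self._listeners.append(fn)

    def unsubscribe(self, fn):
        self._listeners.remove(fn)

    def publish(self, event):
        for fn in list(self._listeners):
            fn(event)


class Report:
    """Stands in for a large object built while handling one request."""

    def __init__(self):
        self.rows = [0] * 100_000
        self.events_seen = 0


def attach_listener(bus):
    report = Report()

    def on_event(event):
        # The closure captures `report`, so while the bus holds this
        # function, the whole Report stays reachable.
        report.events_seen += 1

    bus.subscribe(on_event)
    return on_event, weakref.ref(report)


bus = EventBus()
listener, report_ref = attach_listener(bus)
bus.publish("tick")

gc.collect()
print(report_ref() is not None)  # True: bus -> on_event -> report

bus.unsubscribe(listener)
del listener
gc.collect()
print(report_ref() is None)      # True: nothing references the Report any more
```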

The important concept here is reference chains.

If object A references B, and B references C, then C remains alive as long as A is reachable.

Even if C is the only object actually consuming significant memory.

An analogy helps.

Imagine a warehouse storing boxes. Each box has a label referencing another box. Even if most boxes are no longer needed, the warehouse cannot discard them if a label still points to them.

The garbage collector behaves the same way.

4. GC Pressure: When the Garbage Collector Works Too Hard

Not all memory issues involve objects living too long.

Sometimes the problem is the opposite.

Objects die too quickly.

Modern backend services allocate huge numbers of temporary objects while handling requests.

Examples include:

- Parsing JSON

- Building response objects

- Database result transformations

- Serialization logic

Normally this is fine. Generational garbage collectors, such as those in the JVM and V8, are specifically optimized for short-lived objects.

But under heavy load, something changes.

The system allocates objects faster than the GC can comfortably reclaim them.

This creates GC pressure.

Instead of running occasionally, the garbage collector starts running constantly.

This leads to:

- Increased CPU usage

- Frequent GC cycles

- Short pauses during collection

- Latency spikes

From the outside, it may look like the system is under heavy computational load.

In reality, the runtime is spending a large portion of time cleaning up temporary objects.
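One way to see this from inside a process is to count collector runs and the time spent in them. The sketch below uses CPython's real `gc.callbacks` hook. It is CPython-specific: most short-lived objects there are freed immediately by reference counting, so the hypothetical `handle_request` deliberately builds a reference cycle on every call to push work onto the cyclic collector. On the JVM or in Node.js you would typically read GC logs or runtime metrics instead.

```python
import gc
import time


class GcMonitor:
    """Counts cyclic-GC runs and the time spent in them via gc.callbacks."""

    def __init__(self):
        self.collections = 0
        self.gc_seconds = 0.0
        self._started = None

    def _callback(self, phase, info):
        # CPython calls each callback with phase "start" or "stop".
        if phase == "start":
            self._started = time.perf_counter()
        elif phase == "stop" and self._started is not None:
            self.gc_seconds += time.perf_counter() - self._started
            self.collections += 1
            self._started = None

    def __enter__(self):
        gc.callbacks.append(self._callback)
        return self

    def __exit__(self, *exc_info):
        gc.callbacks.remove(self._callback)
        return False


def handle_request(i):
    # Hypothetical handler that builds a temporary response object.
    # The self-reference forms a cycle, so reference counting cannot
    # free it and the cyclic collector must reclaim it later.
    response = {"id": i, "items": [str(n) for n in range(20)]}
    response["self"] = response
    return len(response["items"])


with GcMonitor() as monitor:
    start = time.perf_counter()
    for i in range(200_000):
        handle_request(i)
    elapsed = time.perf_counter() - start

print(f"GC runs: {monitor.collections}, "
      f"time in GC: {monitor.gc_seconds:.3f}s of {elapsed:.3f}s")
```

The exact numbers depend on the Python version and machine; the useful signal is how GC runs and GC time scale as the allocation rate rises.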

Think of it like a restaurant kitchen.

If dishes arrive slowly, the dishwasher keeps up easily.

But if dishes arrive faster than they can be washed, the kitchen staff ends up spending all their time cleaning plates instead of cooking.

GC pressure creates the same situation.

5. The Cache That Slowly Kills Your System

One of the most common production memory issues comes from caching.

Caching is usually added with good intentions.

An expensive operation is repeated frequently, so someone stores the result in memory.

Performance improves immediately.

Everyone is happy.

But weeks later the service begins running out of memory.

The problem is usually simple.

The cache has no limits.

For example:

cache[key] = largeObject

Without an eviction policy, the cache grows indefinitely as new keys appear.

Each entry might be valid. Nothing is technically wrong.

But eventually the cache becomes large enough to destabilize the system.

Cached objects are also often larger than expected.

They may contain:

- Large query results

- Nested objects

- Serialized payloads

A well-designed cache always includes constraints such as:

- LRU (Least Recently Used) eviction

- TTL (Time To Live) expiration

- Maximum size limits
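A minimal sketch of a cache that combines all three constraints, built on `collections.OrderedDict`. The class name `BoundedCache` and the injectable `clock` parameter are illustrative assumptions, not a standard API. For size-only LRU caching of function results, Python's built-in `functools.lru_cache(maxsize=...)` already exists.

```python
import time
from collections import OrderedDict


class BoundedCache:
    """Hypothetical LRU cache with a max entry count and a per-entry TTL."""

    def __init__(self, max_entries, ttl_seconds, clock=time.monotonic):
        self.max_entries = max_entries
        self.ttl = ttl_seconds
        self.clock = clock            # injectable so tests can fake time
        self._data = OrderedDict()    # key -> (expires_at, value)

    def get(self, key, default=None):
        item = self._data.get(key)
        if item is None:
            return default
        expires_at, value = item
        if self.clock() >= expires_at:
            del self._data[key]       # TTL expiration on read
            return default
        self._data.move_to_end(key)   # mark as most recently used
        return value

    def put(self, key, value):
        self._data[key] = (self.clock() + self.ttl, value)
        self._data.move_to_end(key)
        while len(self._data) > self.max_entries:
            self._data.popitem(last=False)  # evict least recently used

    def __len__(self):
        return len(self._data)


cache = BoundedCache(max_entries=2, ttl_seconds=60)
cache.put("a", 1)
cache.put("b", 2)
cache.get("a")       # "a" becomes the most recently used entry
cache.put("c", 3)    # over the limit, so "b" is evicted
print(cache.get("b"), cache.get("a"), cache.get("c"))  # None 1 3
```

In this sketch, expired entries are only removed when they are read or pushed out by the size limit, so the size limit is what actually bounds memory.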

The key insight is this:

A cache without limits is simply a delayed memory failure.

6. Memory Fragmentation and Large Object Allocation

Long-running services can also experience memory fragmentation.

Over time, memory becomes divided into many small blocks as objects are allocated and freed.

Imagine repeatedly allocating different-sized objects.

Eventually the memory space looks like this:

used | free | used | free | used | free

Even if total free memory is large, there may not be a large contiguous block available for a new allocation.

This leads to situations where:

- Memory appears available

- But large allocations fail

- Or the runtime expands the heap unnecessarily
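The effect can be reproduced with a toy first-fit allocator over a fixed array of slots. This is purely an illustration: the hypothetical `first_fit` and `free` functions bear no resemblance to how production allocators work, and some garbage collectors compact the heap precisely to avoid this situation.

```python
def first_fit(arena, size, owner):
    """Claim the first run of `size` free slots; return its start or None."""
    run = 0
    for i, slot in enumerate(arena):
        run = run + 1 if slot is None else 0
        if run == size:
            start = i - size + 1
            for j in range(start, i + 1):
                arena[j] = owner
            return start
    return None


def free(arena, owner):
    """Release every slot owned by `owner`."""
    for i, slot in enumerate(arena):
        if slot == owner:
            arena[i] = None


def largest_free_run(arena):
    best = run = 0
    for slot in arena:
        run = run + 1 if slot is None else 0
        best = max(best, run)
    return best


arena = [None] * 12
for n in range(6):
    first_fit(arena, 2, f"obj{n}")  # six 2-slot objects fill the arena
for n in (1, 3, 5):
    free(arena, f"obj{n}")          # free every other object

print("free slots:", arena.count(None))                   # free slots: 6
print("largest free run:", largest_free_run(arena))       # largest free run: 2
print("3-slot allocation:", first_fit(arena, 3, "big"))   # 3-slot allocation: None
```

Half the arena is free, yet a 3-slot request fails because no three free slots are adjacent.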

Large objects such as buffers, arrays, or massive JSON payloads make this worse.

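Many runtimes treat large objects differently from normal allocations, which can create additional pressure on the heap. For example, the JVM's G1 collector places "humongous" objects (at least half a region in size) in dedicated contiguous regions, and V8 allocates objects above a size threshold in a separate large-object space where the collector never moves them.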

7. How Memory Problems Actually Appear in Production

Memory problems rarely appear suddenly.

Instead, they show up gradually.

Typical symptoms include:

- Increasing memory usage over time

- GC pauses becoming longer

- CPU usage slowly rising

- Latency spikes during peak traffic

- Services restarting after out-of-memory (OOM) errors

These issues are often misdiagnosed initially.

Teams may blame:

- database latency

- network instability

- traffic spikes

Only after examining memory metrics does the real issue become clear.

Memory problems tend to behave like slow-burning fires rather than sudden explosions.

8. Debugging Memory Issues in Real Systems

When dealing with memory issues, guessing rarely works.

Engineers must rely on measurement.

Useful tools include:

- memory profiling

- heap dumps

- allocation tracking

- observability dashboards

Heap dumps allow engineers to inspect what objects are occupying memory and how they are referenced.

Allocation tracking shows where the system creates the most objects.
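In Python, the standard-library `tracemalloc` module offers a lightweight version of this: it records where live memory was allocated, and comparing two snapshots shows which source lines accounted for growth. The sketch below assumes a hypothetical `retained` list and `handle_request` function that leak about 1 KB per call.

```python
import tracemalloc

retained = []  # hypothetical long-lived structure that keeps growing


def handle_request(i):
    # Each call leaves roughly 1 KB behind in `retained`.
    retained.append(bytes(1024))


tracemalloc.start()
before = tracemalloc.take_snapshot()

for i in range(5_000):
    handle_request(i)

after = tracemalloc.take_snapshot()
top = after.compare_to(before, "lineno")

# Print the three source lines whose live memory grew the most.
for stat in top[:3]:
    print(stat)

tracemalloc.stop()
```

The first line of output should point at the `retained.append(...)` line, which is exactly the kind of pattern that is hard to spot by reading code.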

These tools reveal patterns that are invisible in source code.

A useful principle to remember:

Memory issues rarely reveal themselves instantly.

They appear through patterns over time.

Understanding those patterns is the key to solving them.

9. Practical Strategies to Avoid These Problems

Design Bounded Systems

Any data structure that grows indefinitely will eventually cause problems.

Always limit in-memory structures.
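Python's standard library already provides bounded building blocks. The sketch below uses `collections.deque(maxlen=...)` as a hypothetical "recent events" debug buffer that silently drops the oldest entries, and `queue.Queue(maxsize=...)` as a work queue that rejects new jobs when full instead of buffering without limit. The sizes are arbitrary.

```python
import queue
from collections import deque

# Debug buffer: keeps only the most recent 1,000 events.
recent_events = deque(maxlen=1_000)
for i in range(10_000):
    recent_events.append({"event_id": i})

print(len(recent_events), recent_events[0]["event_id"])  # 1000 9000

# Work queue: refuses new jobs once 100 are waiting.
work_queue = queue.Queue(maxsize=100)
rejected = 0
for job_id in range(105):
    try:
        work_queue.put_nowait(job_id)
    except queue.Full:
        rejected += 1  # shed load instead of growing memory

print(work_queue.qsize(), rejected)  # 100 5
```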

Control Object Lifetimes

Objects should live only as long as necessary.

Avoid keeping request data in global structures.

Design Caches Carefully

Caches must include eviction strategies like:

- LRU

- TTL

- Size limits

Monitor Memory Early

Track metrics such as:

- heap usage

- GC frequency

- allocation rate

Memory trends often reveal problems long before outages occur.

10. Why Memory Problems Are a Systems Issue

Memory issues rarely come from a single bad line of code.

Instead, they usually involve several interacting factors:

- runtime behavior

- application architecture

- caching strategies

- traffic patterns

- workload characteristics

Solving these problems requires systems thinking.

Understanding how the application behaves under real load is far more valuable than memorizing garbage collector internals.

Conclusion

When engineers hear "memory problem", they often think of memory leaks.

But leaks are only one type of failure.

Many real-world outages happen because of:

- object retention

- cache misuse

- allocation churn

- GC pressure

These problems occur even when garbage collection is working perfectly.

The real lesson is simple:

Memory problems rarely come from forgetting to free memory.

They come from keeping things around longer than the system can afford.